Evolving Storefront Architecture with Backend-For-Frontend (BFF)

Overview

As storefront platforms expanded across multiple client experiences—desktop, mobile, and native apps—growing performance and development challenges were inevitable.

Each frontend communicated directly with multiple backend services, leading to duplicated logic, inconsistent response handling, and slower feature delivery.

Problem: Fragmented APIs and Frontend Overhead

Performance Issues

- Multiple requests per view (product, cart, user)

- High latency from chatty backend services

- No centralized caching strategy

Architectural Complexity

- Each frontend consumed 4-7 backend services directly


DX and Scalability Barriers

- New features required changes in multiple frontends

- Hard to onboard new developers

- Platform-specific bugs increased QA overhead

Without BFF (Technical Analysis)

Let's say your React or Next.js storefront needs to render a product page. Without a BFF, it might call something like:

    GET /products/:id
    GET /inventory/:id
    GET /prices/:id
    GET /reviews/:id
    GET /user/:id

Now, imagine mobile needs different fields and other transformations. You would either:

- Write branching logic in your frontend, or

- Duplicate data-fetching code between platforms

The better option? Neither: both of them plainly suck.
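To make the cost concrete, here is a rough sketch of what each client ends up owning without a BFF. It is illustrative only: `GetJson` is a hypothetical transport wrapper, and the `name` and `description` fields are assumed for the example rather than taken from a real service contract.

```typescript
// Hypothetical transport: in a real storefront this would wrap fetch().
type GetJson = (path: string) => Promise<any>;
type Platform = "desktop" | "mobile";

// Without a BFF, every client performs five round trips and then
// applies its own platform-specific shaping.
async function loadProductView(
  getJson: GetJson,
  productId: string,
  userId: string,
  platform: Platform
) {
  const [product, inventory, price, reviews, user] = await Promise.all([
    getJson(`/products/${productId}`),
    getJson(`/inventory/${productId}`),
    getJson(`/prices/${productId}`),
    getJson(`/reviews/${productId}`),
    getJson(`/user/${userId}`),
  ]);

  const base = {
    name: product.name, // assumed field
    price: price.amount,
    inStock: inventory.quantity > 0,
    canBuy: user.canPurchase,
  };

  // Branching like this gets re-implemented or copied on every platform.
  return platform === "mobile"
    ? base
    : { ...base, description: product.description, reviewCount: reviews.total };
}
```

Every new client type adds another branch here, or another copy of the whole function.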

Challenge

On recent projects (e.g., Clarks, LKQ), our frontends needed to integrate data from:

- commercetools (CT) for product information

- Feature flag services for conditional caching and toggles

- Store configurations for localized selections and attributes

- CMS

Each of these APIs had different structures and latency profiles. Frontend engineers often had to:

- Orchestrate multiple network requests.

- Parse inconsistent data shapes.

- Implement repetitive logic for mapping or filtering data per store.

This made it difficult to maintain performance and code clarity across different storefronts.
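One small example of the repetitive mapping logic mentioned above is picking a localized value. commercetools represents localized text as an object keyed by language tag. The helper below is a hypothetical sketch (the name `localize` and the fallback order are assumptions) of logic that tends to get copied into every storefront when there is no shared layer.

```typescript
// commercetools-style localized value: an object keyed by language tag.
type LocalizedString = { [languageTag: string]: string | undefined };

// Pick the best match: the exact locale, then its base language,
// then a fallback locale. Returns undefined if none is present.
function localize(
  value: LocalizedString,
  locale: string,
  fallbackLocale: string = "en"
): string | undefined {
  const language = locale.split("-")[0];
  return value[locale] ?? value[language] ?? value[fallbackLocale];
}
```

For instance, `localize({ en: "Boot" }, "en-GB")` falls back from `en-GB` to `en` and yields `"Boot"`.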

Solution

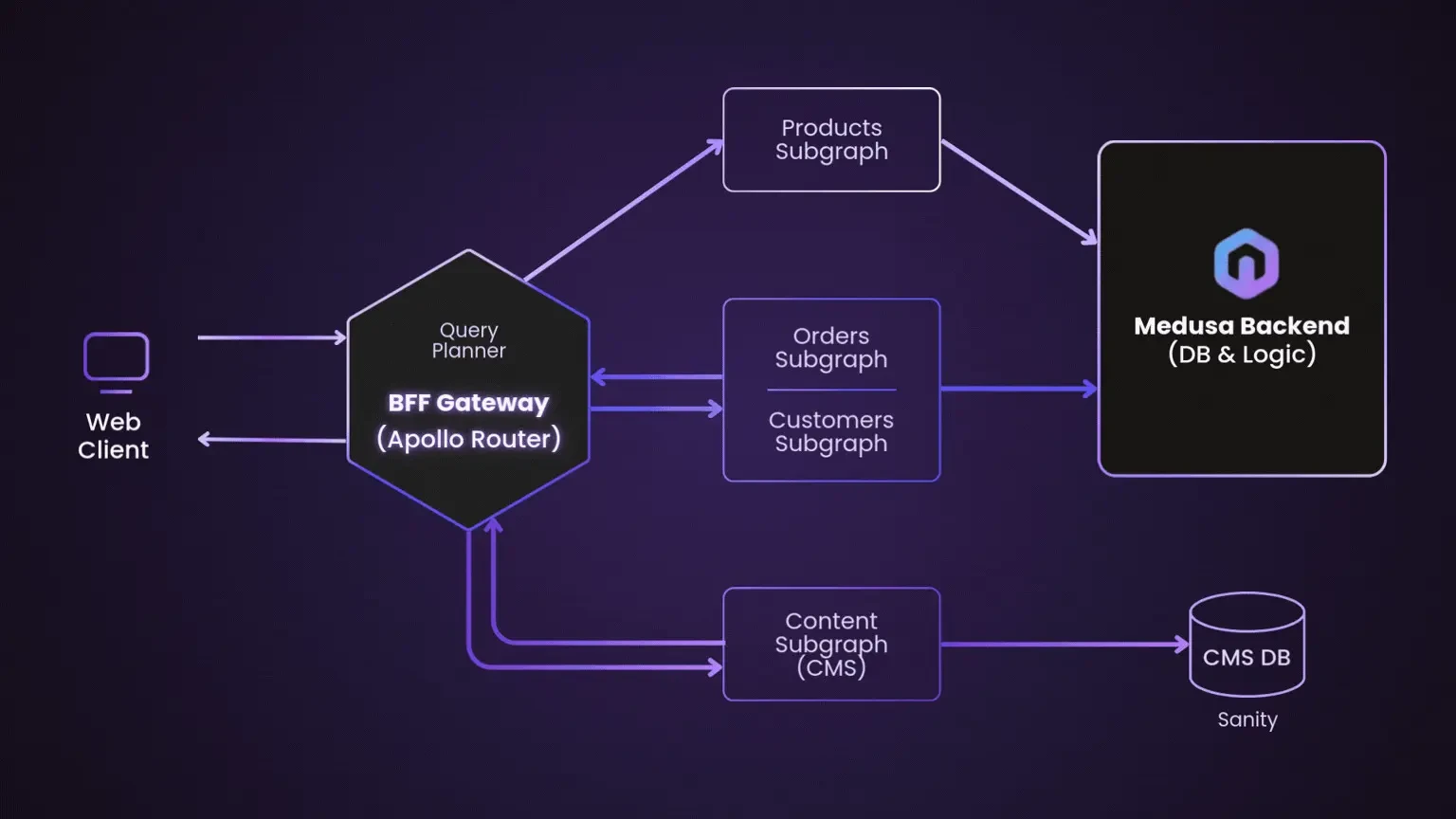

We introduced a dedicated BFF service for each client type. Instead of direct microservice access, frontends now communicate with a single optimized layer.

Rationale - Architecture Before vs After

Before:

Frontend → Product Service

Frontend → Inventory Service

Frontend → Auth Service

Frontend → Pricing Service

After:

Frontend → BFF

BFF → Backend Services (as needed)

With BFF (Technical Analysis)

Instead of the frontend calling multiple services, it hits ONE endpoint:

    GET /bff/product-page/:id

And behind the scenes, BFF does something like this:

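    // Parse the JSON body of an upstream response.
    // Paths are shown relative for brevity; a real service would use
    // the absolute URLs of the internal backends.
    const getJson = (url) => fetch(url).then((r) => r.json());

    app.get('/product-page/:id', async (req, res) => {
      const productId = req.params.id;

      // Fetch everything the page needs in parallel.
      // req.userId is assumed to be set by auth middleware.
      const [product, inventory, price, reviews, user] = await Promise.all([
        getJson(`/products/${productId}`),
        getJson(`/inventory/${productId}`),
        getJson(`/prices/${productId}`),
        getJson(`/reviews/${productId}`),
        getJson(`/user/${req.userId}`)
      ]);

      // Return one payload shaped for the product page.
      res.json({
        ...product,
        inventory: inventory.quantity,
        price: price.amount,
        reviews: {
          avg: reviews.average,
          count: reviews.total
        },
        user: {
          canBuy: user.canPurchase
        }
      });
    });

Basically, your frontend receives exactly what it needs in one payload, with no orchestration on the client.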
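One thing the aggregation handler above leaves open is what happens when a single upstream call fails: with a plain `Promise.all`, one rejection fails the whole page. A possible refinement, sketched below with hypothetical names (`withFallback`, `Fetchers`, `buildProductPage`), is to let non-critical data such as reviews degrade to a fallback while critical data still fails loudly. This is an assumption about how one might harden the handler, not a description of the project's actual code.

```typescript
// Resolve to `fallback` instead of rejecting, so one failing
// non-critical call cannot fail the whole aggregated response.
function withFallback<T>(request: Promise<T>, fallback: T): Promise<T> {
  return request.catch(() => fallback);
}

type Reviews = { average: number; total: number };

// Hypothetical upstream clients, injected so the sketch stays self-contained.
type Fetchers = {
  product: (id: string) => Promise<{ id: string; name: string }>;
  reviews: (id: string) => Promise<Reviews>;
};

async function buildProductPage(fetchers: Fetchers, id: string) {
  const [product, reviews] = await Promise.all([
    fetchers.product(id), // critical: a failure here still rejects
    withFallback<Reviews | null>(fetchers.reviews(id), null), // optional
  ]);

  return {
    ...product,
    reviews: reviews ? { avg: reviews.average, count: reviews.total } : null,
  };
}
```

The frontend then only has to handle `reviews: null`, instead of a failed page load.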

Key technical changes

- Aggregates multiple service calls into one response: instead of 5 or more requests, the frontend makes only 1.

- Shapes data to frontend needs: mobile does not need the same fields as desktop, so the BFF adjusts the payload per client.

- Hides backend complexity: if backend services are inconsistent or change frequently, the frontend doesn't break.

- Adds feature flags and experimentation hooks: the BFF can send different data or layouts for experiments.
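As a minimal sketch of per-client shaping, the function below trims a full product payload for mobile. The `ProductPage` fields and the choice of what mobile drops are assumptions made for illustration; the real split depends on each client's screens.

```typescript
type ClientType = "desktop" | "mobile";

// Hypothetical aggregated payload produced by the BFF.
interface ProductPage {
  id: string;
  name: string;
  description: string;
  price: number;
  imageUrls: string[];
}

// Desktop gets the full payload; mobile gets a compact variant
// without the long description and with only the first image.
function shapeForClient(page: ProductPage, client: ClientType) {
  if (client === "mobile") {
    return {
      id: page.id,
      name: page.name,
      price: page.price,
      imageUrls: page.imageUrls.slice(0, 1),
    };
  }
  return page;
}
```

Keeping this decision in the BFF means a change to the mobile payload does not require touching the desktop client.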

Implementation:

BFF Layer

Each resolver in the BFF focuses on a single domain. For example, our ProductProcessor handles product-related queries.

Example: ProductProcessor (BFF Layer)

    export class ProductProcessor extends BaseProcessor<QueryProductArgs> {
      async process() {
        const { key, locale } = this.event.arguments.input;
        // The feature flag decides whether the product lookup may use the cache
        const CACHE_PRODUCT_ENABLED = await this.featureFlagService.isFeatureEnabled(FlagName.PRODUCT_RESOLVER_CACHE);

        // Query the product, its product selections, and the store data in parallel
        const [[product], assignedProductSelections, storeData] = await Promise.all([
          ctClient().getProductsProjectionWhere(
            { where: queryBuilder(`key="${key}"`).build(), cacheProduct: CACHE_PRODUCT_ENABLED }
          ),
          ctClient().getProductSelectionByProductKey(key, 'productSelection'),
          getStoreData(locale),
        ]);

        if (!product) throw new NotFound(`No product found`);
        // Map the raw results to the shape the GraphQL schema expects
        return map(product, assignedProductSelections, storeData, locale);
      }
    }

This function:

- Pulls product data from commercetools.

- Looks up the product selections assigned to the product.

- Retrieves the store data for the requested locale.

- Applies caching logic based on feature flags.

- Returns a mapped response specifically tailored for our GraphQL schema.

By moving this logic to the BFF, our frontend teams no longer need to worry about backend data inconsistencies or caching rules.
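The source does not show how `cacheProduct` is handled inside `ctClient`, so the following is only a generic sketch of flag-gated caching, not the project's implementation. It assumes a simple in-memory `Map` with a time-to-live; `IsEnabled`, `cachedWhenEnabled`, and the cache key format are hypothetical.

```typescript
// Hypothetical flag lookup, standing in for a feature flag service.
type IsEnabled = (flag: string) => Promise<boolean>;

// Simple in-memory cache with per-entry expiry (assumption for this sketch).
const cache = new Map<string, { value: unknown; expiresAt: number }>();

async function cachedWhenEnabled<T>(
  isEnabled: IsEnabled,
  flag: string,
  key: string,
  ttlMs: number,
  load: () => Promise<T>
): Promise<T> {
  // Flag off: bypass the cache entirely and always hit the source.
  if (!(await isEnabled(flag))) return load();

  const hit = cache.get(key);
  if (hit && hit.expiresAt > Date.now()) return hit.value as T;

  // Cache miss or expired entry: load and store the fresh value.
  const value = await load();
  cache.set(key, { value, expiresAt: Date.now() + ttlMs });
  return value;
}
```

Because the flag is checked on every call, turning it off takes effect immediately without a deploy, which is the main appeal of gating the cache this way.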

GraphQL as the Communication Layer

Our BFF exposes a GraphQL API using AWS AppSync. Frontend applications interact with this single endpoint through Apollo Client, defining exactly what data they need — no overfetching, no redundant network calls.

Example: getProduct (Frontend GraphQL Query)

    export const getProduct = async (apolloClient, { input }) => {
      const { data: { product } } = await apolloClient.query({
        query: GetProductDocument,
        variables: { input },
        fetchPolicy: 'no-cache',
      });

      return { data: product };
    };

This approach:

- Simplifies data fetching: The frontend queries only one endpoint.

- Improves developer experience: Strongly typed queries via GraphQL code generation.

- Reduces latency: Data from multiple sources is aggregated before reaching the frontend.
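To illustrate the typing benefit, here is a rough sketch of what a typed version of this query could look like. The interfaces are hand-written stand-ins for what a GraphQL code generator would emit, and `QueryClient` is a minimal hypothetical interface rather than Apollo Client's real type (Apollo expects a parsed query document, not a string). The `key` and `locale` inputs mirror the resolver arguments shown earlier; the `name` field is assumed.

```typescript
// Stand-ins for generated types (field names partly assumed).
interface ProductInput { key: string; locale: string }
interface GetProductQuery { product: { key: string; name: string } | null }
interface GetProductQueryVariables { input: ProductInput }

// Minimal hypothetical client interface, so the sketch is self-contained.
interface QueryClient {
  query<TData, TVars>(options: { query: string; variables: TVars }): Promise<{ data: TData }>;
}

// The client asks only for the fields this view needs.
const GET_PRODUCT = `
  query GetProduct($input: ProductInput!) {
    product(input: $input) { key name }
  }
`;

async function getProductTyped(client: QueryClient, input: ProductInput) {
  const { data } = await client.query<GetProductQuery, GetProductQueryVariables>({
    query: GET_PRODUCT,
    variables: { input },
  });
  // The compiler knows this is { key, name } or null.
  return data.product;
}
```

With types like these, a renamed or removed schema field surfaces as a compile error in the frontend instead of a runtime bug.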

Key takeaways / Conclusion

- BFF enables better API orchestration: Keep the backend messy but the frontend clean.

- GraphQL bridges flexibility and performance: Let the client dictate what it needs.

- Strong typing and codegen: Type safety across both layers accelerates development and reduces bugs.

- Feature flag–driven architecture: Easy to toggle caching and feature rollouts safely.

About the Contributor

John Patrick Alagar, Associate Software Engineer

John Patrick AlagarView ProfileAssociate Software Engineer

Discuss this topic with an expert.

Related Topics

Level Up Shopping: How Gamification Is Transforming E-CommerceHow AI and Playful Design Are Redefining Customer Loyalty

Invisible UX: When the best interface is no interfaceTurns out less really is more.

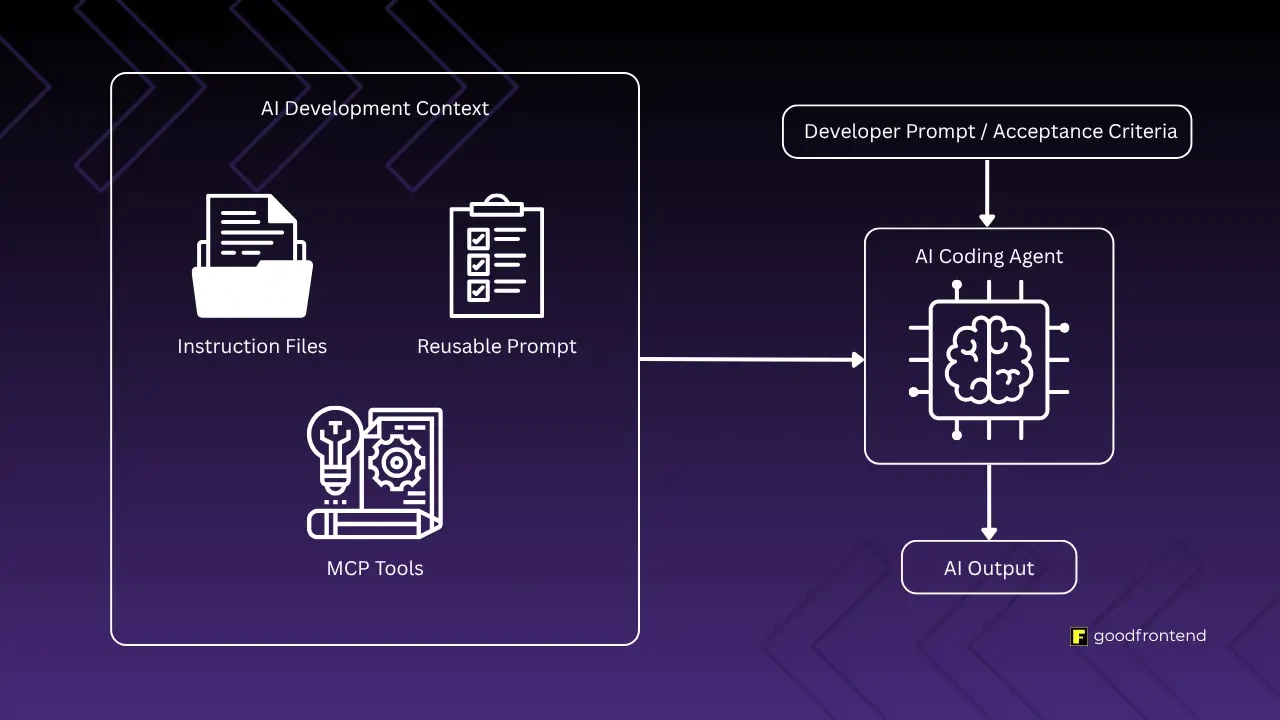

Software Development in the Age of AIAs AI-assisted development becomes the norm, the need for standardized context management systems arises for team workflows.